Every conversation about synthetic research eventually arrives at the same question. Can it replace real people?

It's a reasonable-sounding question. It's also the wrong one, and answering it the way it's asked leads to bad decisions on both sides.

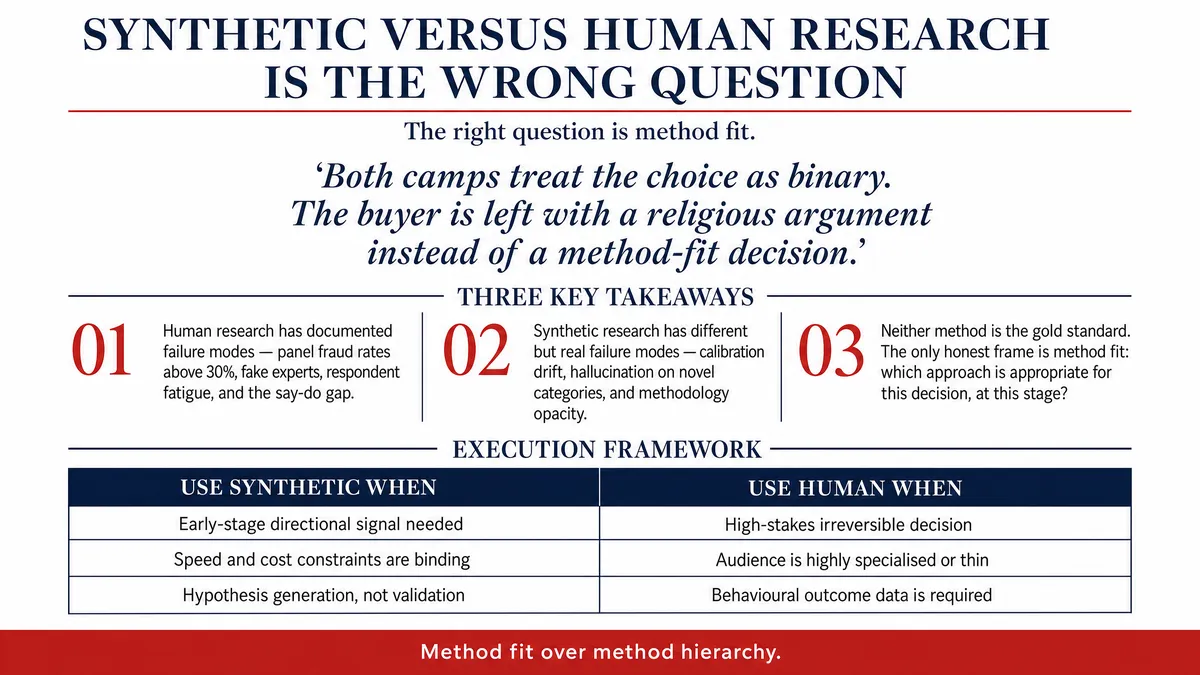

Vendors who say yes, we can replace human research are overclaiming, and serious buyers smell it. Traditional researchers who say no, nothing replaces real respondents are protecting a status quo that has its own well-documented failure modes. Both camps treat the choice as binary. The buyer is left with a religious argument instead of a method-fit decision.

Method fit is the only frame that pays off. Not which is better, but which is appropriate for this decision, at this stage, with this confidence requirement.

Traditional research has failure modes too

The synthetic-versus-human debate usually frames human research as the gold standard against which synthetic must prove itself. The framing is comforting to incumbents. It's also wrong.

Human research has its own well-cataloged failure modes, and any honest method-fit conversation has to put them on the table.

Panel fraud. Professional respondents and bots have been a known industry problem for years. Some panels report fraud rates north of 30 percent before quality controls. Even after controls, the residual is non-trivial.

Fake experts. B2B and expert-network research is structurally vulnerable to misrepresentation. The economic incentive to claim seniority you don't have is real, and verification is expensive.

Respondent fatigue. Panel members get tired. Tired respondents straight-line, satisfice, and skip. The data still comes back, but the signal-to-noise ratio degrades quietly.

Recruitment selection bias. The people who respond to recruitment incentives aren't the people you thought you were sampling. They're the people who respond to recruitment incentives. The gap between the two rarely gets audited.

Social-desirability bias. Respondents say what sounds good. The say-do gap, the difference between what people tell a researcher and what they actually do, is one of the most stable findings in behavioural science. Traditional research captures the said. The done is harder.

None of this means human research is broken. It means human research is a method, like any other, with strengths, weaknesses, and failure modes. Pretending otherwise sets the bar in the wrong place.

Synthetic research has failure modes too

The same honesty has to run the other way.

Synthetic research isn't a black box that returns truth. It's a model of an audience, and a model is only as good as the data behind it and the discipline applied to it. The failure modes are different, but they're real.

Calibration drift. A synthetic population built on stale demographic or behavioural data starts to lag reality. Without active recalibration, the model degrades the way any model does.

Out-of-distribution questions. Ask a synthetic population about something the underlying data can't inform, like a brand-new product category or a behaviour that didn't exist when the data was collected, and the model will hallucinate plausibly.

Population thinness. Highly specialised audiences, very small populations, or markets with weak underlying data aren't yet a strong fit. Pretending they are is a fast way to lose credibility.

Methodology opacity. A synthetic study where the buyer can't explain how the audience was constructed isn't defensible internally. The method has to travel.

A serious synthetic vendor will tell you those things up front. A vendor who promises 100 percent fidelity to traditional research across every audience type and every question is selling the same thing the panel-fraud problem is selling: a story that sounds good until you look closely.

What method fit actually looks like

Once both methods are on the table with their failure modes, the conversation gets practical. Not which is better, but which fits this decision.

A few patterns recur often enough to be useful as defaults.

When the decision is fast and the audience is hard to reach, synthetic earns its keep. The alternative is no research, or research that lands after the decision is made. Pressure-testing a concept against synthetic cardiac-rehab specialists in week one beats commissioning panel work that arrives in week ten.

When the decision is high-stakes and the audience is reachable, human research holds. Regulatory submissions, legal exposure, behavioural validation against real-world outcomes: the cost of being wrong is high enough that the slower, more expensive method earns its premium.

When the decision is iterative, both belong in the same workflow. Synthetic for the early-stage exploration where you're sharpening hypotheses and killing weak ideas. Human for the validation pass on the survivors. The cost curve and the speed curve stack correctly.

When the audience is genuinely novel, neither method is comfortable. Synthetic models lag novelty. Human research can capture it but takes time. The honest answer is to use both, sceptically, and triangulate.

Synthetic and human research aren't interchangeable. They're different tools. Tool choice is a method-fit decision driven by the question, not a category war driven by vendor preference.

How to evaluate either method

A practical filter, useful in either direction: ask three questions of any research approach before commissioning it.

What does this method tell me, and what doesn't it? Be specific. It tells me stated preference, not behavioural outcome. Or it tells me general-population sentiment, not regulated-clinician judgement. A method that can't answer this question clearly is one you can't defend.

What is the failure mode, and how would I know it had happened? Every method has one. Panel fraud. Calibration drift. Out-of-distribution hallucination. Recruitment selection. If the vendor can't describe the failure mode, the vendor hasn't thought about it.

What is the next step if the answer is unexpected? Research with no follow-up plan isn't going to inform a decision. Synthetic research earns its place when it sequences into deeper work, kills a bad idea, or unlocks a budget conversation. Human research earns its place when it validates a hypothesis sharp enough to be worth validating. Either way, the question is what do we do with the answer?

A method that survives those three questions is a method worth using. The label matters less than the discipline behind it.

The honest position

Synthetic research is a real method with real value. Buyers who treat it as either a miracle or a fraud will both be wrong. Buyers who treat it as a tool, appropriate for some decisions and inappropriate for others, complementary to human research more often than competitive with it, will get the most out of it.

Synthetic versus human research is the wrong question.

The right question: which method gives me a defensible answer to this decision, at this stage, with this level of confidence required, on this timeline?

Answer that, and the technology question takes care of itself.