The first synthetic-research pilot is the most important one a team will run, and the easiest one to get wrong.

Get it wrong, and the team walks away with a slick demo and no internal champion. The pilot proves the technology works in principle but fails to anchor a real decision. Six months later, no one can remember what the pilot was for, and the budget conversation never starts.

Get it right, and the pilot becomes the reason the next ten projects get commissioned. Not because the methodology was novel, but because it changed what got built, bought, or believed.

The difference between the two outcomes isn't the vendor. It's the design of the pilot.

The first pilot isn't a software trial

Most enterprise software pilots are designed to evaluate the tool. Does it integrate with our systems? Does the UI work for our team? Can we get seats provisioned by Q3? Reasonable questions for most software categories.

For synthetic research, they're the wrong questions to lead with.

The first synthetic pilot isn't a software trial. It's a method trial wrapped around a real decision. The team isn't asking do the seats work? The team is asking can this method give us a defensible answer to a question we currently can't answer fast enough?

That reframing matters. It changes what gets scoped, what success looks like, and what the next step is when the pilot ends. Get it right at the brief stage and the rest follows. Get it wrong and the pilot ends up as a clever demo nobody can build a budget case around.

Three kinds of confidence to prove

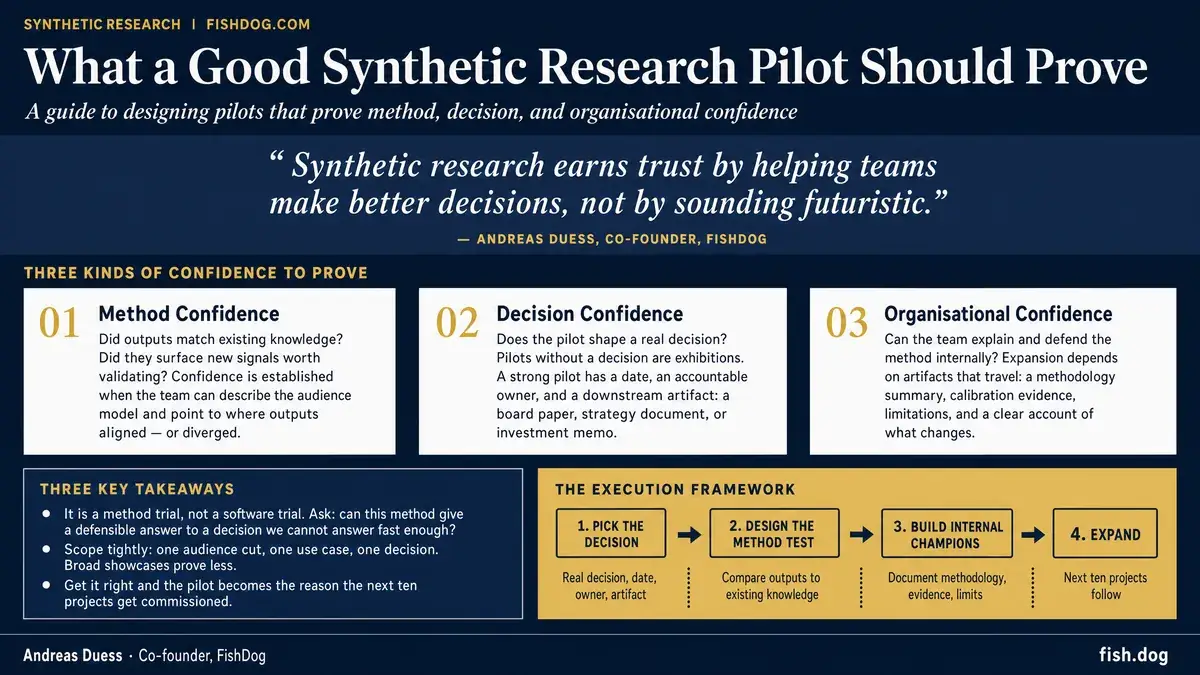

A good pilot proves three things, in order. Skip any of them and the pilot doesn't graduate to a budget conversation.

1. Method confidence

Does this method produce outputs we can trust enough to act on?

Method confidence is the one most teams focus on, and the one most pilots do answer. The audience is defined. The questions are run. The outputs come back. The team reads them and forms a view on whether the method behaved sensibly.

The trap is treating method confidence as a quality test in the abstract. Did the AI produce good answers? is too vague to be useful. The better test is comparative. Did the synthetic outputs match what we already know about the audience from prior research, where we can check? And: did the synthetic outputs surface anything we didn't already know, in places we now want to verify?

Method confidence is established when the team can describe the audience model, point to where the outputs aligned with prior knowledge, and identify the new signals that warrant follow-up.

It isn't established when the only takeaway is the demo was impressive. Demos aren't pilots.

2. Decision confidence

Does this method help us make or shape a real decision?

Decision confidence is the one most pilots fail on, because most pilots aren't pinned to a real decision in the first place. Pilots without a decision are exhibitions. They prove the method runs without proving the method matters.

A pilot pinned to a decision looks different from the start. There's a date. There's a person whose job depends on getting the call right. There's a downstream artifact, a strategy doc or a board paper or an investment memo or a campaign brief, that the pilot output has to feed.

The pilot doesn't have to make the decision. It has to materially shape it. The bar: did the synthetic outputs change the conversation around the decision? Did they kill a bad option early? Did they sharpen a hypothesis worth validating with primary research? Did they surface an audience tension the team hadn't seen?

Decision confidence is what turns the pilot from a methodology exercise into a budget case. Without it, the next conversation is that was interesting. With it, the next conversation is what else can we point this at?

3. Organisational confidence

Can the buyer explain and defend the approach internally?

Organisational confidence is the one almost no vendor talks about, and the one that determines whether the pilot expands or dies on the vine.

The buyer who commissioned the pilot has to explain it to people who weren't in the room. The skeptical research head. The procurement lead. The CFO asking why this line item is appearing. The peer who has heard horror stories about AI-generated nonsense and wants to know how this is different.

If the buyer can't explain three things, the pilot stalls: how the audience was built, what the method can and can't tell them, and what the failure modes are. The work was good. The internal story isn't transportable. The next round of budget never happens.

Organisational confidence is built deliberately. The pilot has to produce artifacts the buyer can take into a room: a methodology summary they can defend, calibration evidence they understand, an honest list of what the method does poorly, a clean account of where the outputs were verified and where they weren't.

Vendors who skip this step are setting their own pilots up to fail post-readout. The pilot worked. The buyer can't sell it. The expansion never happens.

What that looks like in practice

A pilot designed around the three-confidence frame has a different shape from a pilot designed around the technology.

It starts with a real decision the buyer is about to make, with a date and a downstream artifact. Not let's see what synthetic research can do, but we have to commit to a market-entry call by end of quarter, and we can't reach the audience in time to do the primary research.

It scopes one audience cut, sharply defined. Not three personas across two markets. The audience for this decision, in this market, with these specifying characteristics. A pilot that tries to model everything proves nothing.

It includes a benchmark layer the team can sanity-check against: prior research, internal sales data, qualitative interviews already in hand, public benchmarks. Anything that lets the team check the synthetic outputs against something they already trust. Calibration beats self-report.

It produces three artifacts at the end, not one. The readout: what the synthetic research said. The methodology summary: how it was done, including limitations. The decision frame: what the team is going to do differently because of it.

A pilot built that way takes weeks, not months, and ends with a buyer who can stand up in a room and explain why they want to do it again. That's the only definition of pilot success that matters.

Where to start

Don't start with seats. Start with one audience, one use case, and one decision.

The temptation is to scope big. If this works, we want it across all of insights, all of strategy, all of product. The instinct is right, the sequencing is wrong. A pilot scoped that way proves nothing in particular.

A narrowly scoped pilot, one audience, one decision, one date, proves the three things that matter, and earns the right to scale. The first pilot isn't the deployment. It's the qualifying round for the deployment.

The first pilot should answer a real question, not demonstrate a novelty. Synthetic research earns trust by helping teams make better decisions, not by sounding futuristic.

Pick the decision first. The rest of the pilot follows from there.