Walk into the average enterprise insights team and ask which dashboards they rely on. You'll get a polite answer about the three or four they pretend to use.

Then ask which dashboards they actually open in a normal week. Same team, different answer: "The one our agency built. Sometimes. When we remember."

Most research dashboards are write-only. The data goes in. The dashboard renders. Nobody checks it unless a meeting forces them to. The interface is fine. The integration into the actual decision-making rhythm isn't there.

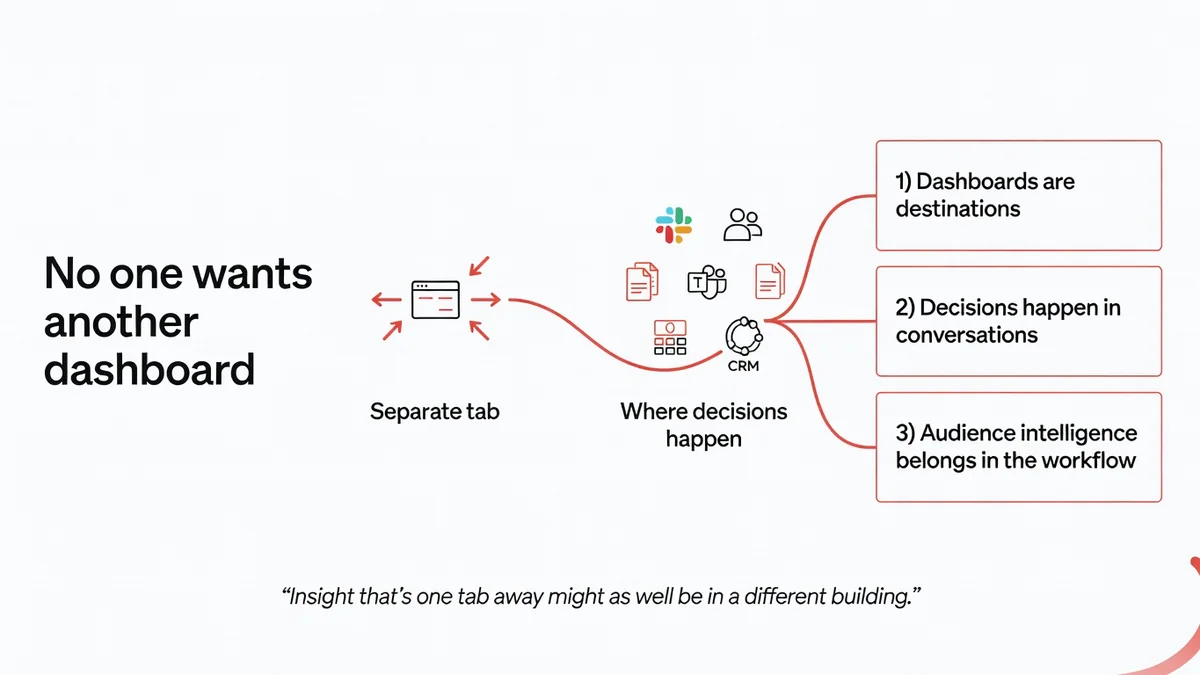

Building another one isn't the answer. The dashboard isn't the problem. Its distance from the decision-making conversation is.

A dashboard isn't a workflow

Every research and intelligence vendor for the last fifteen years has followed the same instinct: build a dashboard. The pitch is always some version of "your team will have one place to see the data."

The pitch is true. It's also useless, because "one place to see the data" doesn't describe how teams make decisions.

Teams make decisions in conversation. In standups. In sprint planning. In briefing meetings. In brand reviews. In Slack threads at 4pm on a Thursday. In a doc someone is drafting for Monday's strategy session. In the Teams call where someone asks "do we know what the audience thinks about X?" and someone else has to say "let me check and get back to you."

By the time anyone gets back to anyone, the conversation has moved on.

The dashboard isn't where the decision happens. The conversation is. A research output that requires a separate tab, a separate login, and a separate cognitive context is structurally late to the discussion that matters. The buyer who said "no one wants another dashboard" wasn't being lazy. They were describing a real friction.

Where work actually happens

The systems where modern enterprise work happens aren't insights tools. They're the productivity surfaces the team already lives in.

Slack and Teams, where the day-to-day conversation runs. Documents and decks, where decisions get drafted, debated, and finalised. CRM and project tools, where customer-facing work gets coordinated. Internal wikis and knowledge bases, where context is preserved between meetings. The browser tab the team has open right now, whatever it is.

Research outputs that show up in those surfaces get used. Research outputs that require leaving those surfaces get ignored, even when the underlying work is excellent.

That isn't a UX preference. It's a cognitive economics problem. The cost of switching contexts is real. Every additional tool the team has to log into is a friction tax on whether the insight gets consulted at all. Insight that's one tab away might as well be in a different building.

What insight in the workflow looks like

Picture a strategy team working through a market-entry brief in a shared doc. Someone asks the question every market-entry brief produces: what does the audience in this market actually think about this category?

In the dashboard model, somebody opens a tab, runs a query, exports the result, screenshots a chart, pastes it into the doc, and writes a caption. Twelve minutes of context-switching for a paragraph of insight. Most teams don't do this. They write the paragraph from memory and instinct, and the audience input never enters the doc.

In the embedded model, the question gets asked of an audience-intelligence agent inside the doc. The answer lands as a written read in the next paragraph. The team challenges it, refines it, keeps writing. The audience signal becomes part of the draft instead of a screenshot pasted next to it.

Same shape in Slack. Someone in #brand-reviews asks "are we wrong about how 35-44 women in tier-two metros react to the new pack design?" In the dashboard model, the question dies in the thread or somebody promises to look into it. In the embedded model, an agent in the channel returns a synthetic-audience read in the same conversation, with caveats, and the brand team has something to argue with by lunch.

Or on a sales call. A consultant is in discovery with a client, and the client raises a question about regional consumer sentiment the consultant can't answer. In the dashboard model, the consultant promises to follow up. In the embedded model, the consultant asks the question of an audience agent during the call and brings the read back into the conversation in real time.

None of those examples are about the dashboard being prettier. They're about the insight arriving in the conversation where the decision is being made, on the timeline the conversation is moving at.

The shift is structural, not cosmetic

It's tempting to read this as a minor UX argument. It isn't. It's a structural argument about where intelligence belongs in an enterprise.

The first wave of enterprise software gave teams better tools to do specific tasks. The second wave gave teams dashboards to monitor what the tools were doing. The third wave, the one that matters now, is about removing the tool surface altogether and putting the intelligence inside the conversation.

Audience research will follow the same arc. The endpoint isn't better dashboards. The endpoint is audience intelligence that lives inside Slack, Teams, docs, decks, sales tools, and the surfaces where the team is already working. The team doesn't log into an audience-intelligence tool. The audience intelligence shows up in the workflow.

That's also why the partner and platform conversation matters here. Many of the most natural homes for synthetic audience intelligence aren't standalone tools. They're existing platforms, advisory workflows, and consulting engagements where the intelligence becomes a layer underneath the delivery model the client already trusts. The buyer doesn't see another vendor. The buyer sees a sharper version of the workflow they already use.

For consultancies and platforms, that turns audience intelligence from a separate service line into a capability they can run inside their existing offer. The economic shape of the relationship changes. The client doesn't have to evaluate a new tool. The partner doesn't have to retrain the buyer. The intelligence shows up where the work already happens.

Where to start

The practical version is small.

Pick one decision-making surface where research questions come up and never get answered fast enough: a Slack channel, a recurring doc, a sales discovery flow, a weekly review meeting. Wire one audience-intelligence capability into that surface. Watch what happens to how the conversation runs over the next month.

If the team starts asking more questions because the cost of asking dropped, the case is made. If the questions get answered well enough to change how decisions get drafted, the case is stronger still. If neither happens, the team has learned something useful about which surface actually drives decisions, and the next experiment can target that one.

The future of synthetic research isn't a better dashboard. It's a thinking partner inside the workflow, present in the conversation where decisions are made.

No one wants another dashboard unless it changes a decision. Build the version that does.